Project: safeai.ethz.ch

Publications

2026

Expressiveness of Multi-Neuron Convex Relaxations in Neural Network Certification

Yuhao Mao*, Yani Zhang*, Martin Vechev

ICLR

2026

* Equal contribution

Dual Randomized Smoothing: Beyond Global Noise Variance

Chenhao Sun, Yuhao Mao, Martin Vechev

ICLR

2026

2025

CTBENCH: A Library and Benchmark for Certified Training

Yuhao Mao, Stefan Balauca, Martin Vechev

ICML

2025

Average Certified Radius is a Poor Metric for Randomized Smoothing

Chenhao Sun*, Yuhao Mao*, Mark Niklas Müller, Martin Vechev

ICML

2025

* Equal contribution

Gaussian Loss Smoothing Enables Certified Training with Tight Convex Relaxations

Stefan Balauca, Mark Niklas Müller, Yuhao Mao, Maximilian Baader, Marc Fischer, Martin Vechev

TMLR

2025

Certification for Differentially Private Prediction in Gradient-Based Training

Matthew Robert Wicker, Philip Sosnin, Igor Shilov, Adrianna Janik, Mark Niklas Müller, Yves-Alexandre de Montjoye, Adrian Weller, Calvin Tsay

ICML

2025

2024

Back to the Drawing Board for Fair Representation Learning

Angéline Pouget, Nikola Jovanović, Mark Vero, Robin Staab, Martin Vechev

arXiv

2024

Understanding Certified Training with Interval Bound Propagation

Yuhao Mao, Mark Niklas Müller, Marc Fischer, Martin Vechev

ICLR

2024

Expressivity of ReLU-Networks under Convex Relaxations

Maximilian Baader*, Mark Niklas Müller*, Yuhao Mao, Martin Vechev

ICLR

2024

* Equal contribution

2023

Connecting Certified and Adversarial Training

Yuhao Mao, Mark Niklas Müller, Marc Fischer, Martin Vechev

NeurIPS

2023

FARE: Provably Fair Representation Learning with Practical Certificates

Nikola Jovanović, Mislav Balunović, Dimitar I. Dimitrov, Martin Vechev

ICML

2023

Abstract Interpretation of Fixpoint Iterators with Applications to Neural Networks

Mark Niklas Müller, Marc Fischer, Robin Staab, Martin Vechev

PLDI

2023

Efficient Certified Training and Robustness Verification of Neural ODEs

Mustafa Zeqiri, Mark Niklas Müller, Marc Fischer, Martin Vechev

ICLR

2023

Certified Training: Small Boxes are All You Need

Mark Niklas Müller*, Franziska Eckert*, Marc Fischer, Martin Vechev

ICLR

2023

* Equal contribution

Spotlight

Human-Guided Fair Classification for Natural Language Processing

Florian E. Dorner, Momchil Peychev, Nikola Konstantinov, Naman Goel, Elliott Ash, Martin Vechev

ICLR

2023

Spotlight

First Three Years of the International Verification of Neural Networks Competition (VNN-COMP)

Christopher Brix, Mark Niklas Müller, Stanley Bak, Changliu Liu, Taylor T. Johnson

STTT ExPLAIn

2023

2022

The Third International Verification of Neural Networks Competition (VNN-COMP 2022): Summary and Results

Mark Niklas Müller*, Christopher Brix*, Stanley Bak, Changliu Liu, Taylor T. Johnson

arXiv

2022

* Equal contribution

(De-)Randomized Smoothing for Decision Stump Ensembles

Miklós Z. Horváth*, Mark Niklas Müller*, Marc Fischer, Martin Vechev

NeurIPS

2022

* Equal contribution

Private and Reliable Neural Network Inference

Nikola Jovanović, Marc Fischer, Samuel Steffen, Martin Vechev

ACM CCS

2022

Latent Space Smoothing for Individually Fair Representations

Momchil Peychev, Anian Ruoss, Mislav Balunović, Maximilian Baader, Martin Vechev

ECCV

2022

On the Paradox of Certified Training

Nikola Jovanović*, Mislav Balunović*, Maximilian Baader, Martin Vechev

TMLR

2022

* Equal contribution

Shared Certificates for Neural Network Verification

Marc Fischer*, Christian Sprecher*, Dimitar I. Dimitrov, Gagandeep Singh, Martin Vechev

CAV

2022

* Equal contribution

Robust and Accurate - Compositional Architectures for Randomized Smoothing

Miklós Z. Horváth, Mark Niklas Müller, Marc Fischer, Martin Vechev

SRML@ICLR

2022

Boosting Randomized Smoothing with Variance Reduced Classifiers

Miklós Z. Horváth, Mark Niklas Müller, Marc Fischer, Martin Vechev

ICLR

2022

Spotlight

Complete Verification via Multi-Neuron Relaxation Guided Branch-and-Bound

Claudio Ferrari, Mark Niklas Müller, Nikola Jovanović, Martin Vechev

ICLR

2022

Provably Robust Adversarial Examples

Dimitar I. Dimitrov, Gagandeep Singh, Timon Gehr, Martin Vechev

ICLR

2022

PRIMA: General and Precise Neural Network Certification via Scalable Convex Hull Approximations

Mark Niklas Müller*, Gleb Makarchuk*, Gagandeep Singh, Markus Püschel, Martin Vechev

POPL

2022

* Equal contribution

2021

Automated Discovery of Adaptive Attacks on Adversarial Defenses

Chengyuan Yao, Pavol Bielik, Petar Tsankov, Martin Vechev

NeurIPS

2021

Robustness Certification for Point Cloud Models

Tobias Lorenz, Anian Ruoss, Mislav Balunović, Gagandeep Singh, Martin Vechev

ICCV

2021

Scalable Polyhedral Verification of Recurrent Neural Networks

Wonryong Ryou, Jiayu Chen, Mislav Balunović, Gagandeep Singh, Andrei Dan, Martin Vechev

CAV

2021

Scalable Certified Segmentation via Randomized Smoothing

Marc Fischer, Maximilian Baader, Martin Vechev

ICML

2021

Automated Discovery of Adaptive Attacks on Adversarial Defenses

Chengyuan Yao, Pavol Bielik, Petar Tsankov, Martin Vechev

AutoML@ICML

2021

Oral

Fast and Precise Certification of Transformers

Gregory Bonaert, Dimitar I. Dimitrov, Maximilian Baader, Martin Vechev

PLDI

2021

Certify or Predict: Boosting Certified Robustness with Compositional Architectures

Mark Niklas Müller, Mislav Balunović, Martin Vechev

ICLR

2021

Scaling Polyhedral Neural Network Verification on GPUs

Christoph Müller*, François Serre*, Gagandeep Singh, Markus Püschel, Martin Vechev

MLSys

2021

* Equal contribution

Robustness Certification with Generative Models

Matthew Mirman, Alexander Hägele, Timon Gehr, Pavol Bielik, Martin Vechev

PLDI

2021

Efficient Certification of Spatial Robustness

Anian Ruoss, Maximilian Baader, Mislav Balunović, Martin Vechev

AAAI

2021

2020

Learning Certified Individually Fair Representations

Anian Ruoss, Mislav Balunović, Marc Fischer, Martin Vechev

NeurIPS

2020

Certified Defense to Image Transformations via Randomized Smoothing

Marc Fischer, Maximilian Baader, Martin Vechev

NeurIPS

2020

Adversarial Attacks on Probabilistic Autoregressive Forecasting Models

Raphaël Dang-Nhu, Gagandeep Singh, Pavol Bielik, Martin Vechev

ICML

2020

Adversarial Training and Provable Defenses: Bridging the Gap

Mislav Balunović, Martin Vechev

ICLR

2020

Oral

Universal Approximation with Certified Networks

Maximilian Baader, Matthew Mirman, Martin Vechev

ICLR

2020

2019

Beyond the Single Neuron Convex Barrier for Neural Network Certification

Gagandeep Singh, Rupanshu Ganvir, Markus Püschel, Martin Vechev

NeurIPS

2019

Certifying Geometric Robustness of Neural Networks

Mislav Balunović, Maximilian Baader, Gagandeep Singh, Timon Gehr, Martin Vechev

NeurIPS

2019

Online Robustness Training for Deep Reinforcement Learning

Marc Fischer, Matthew Mirman, Steven Stalder, Martin Vechev

arXiv

2019

DL2: Training and Querying Neural Networks with Logic

Marc Fischer, Mislav Balunović, Dana Drachsler-Cohen, Timon Gehr, Ce Zhang, Martin Vechev

ICML

2019

Boosting Robustness Certification of Neural Networks

Gagandeep Singh, Timon Gehr, Markus Püschel, Martin Vechev

ICLR

2019

A Provable Defense for Deep Residual Networks

Matthew Mirman, Gagandeep Singh, Martin Vechev

arXiv

2019

An Abstract Domain for Certifying Neural Networks

Gagandeep Singh, Timon Gehr, Markus Püschel, Martin Vechev

ACM POPL

2019

2018

Fast and Effective Robustness Certification

Gagandeep Singh, Timon Gehr, Matthew Mirman, Markus Püschel, Martin Vechev

NIPS

2018

Differentiable Abstract Interpretation for Provably Robust Neural Networks

Matthew Mirman, Timon Gehr, Martin Vechev

ICML

2018

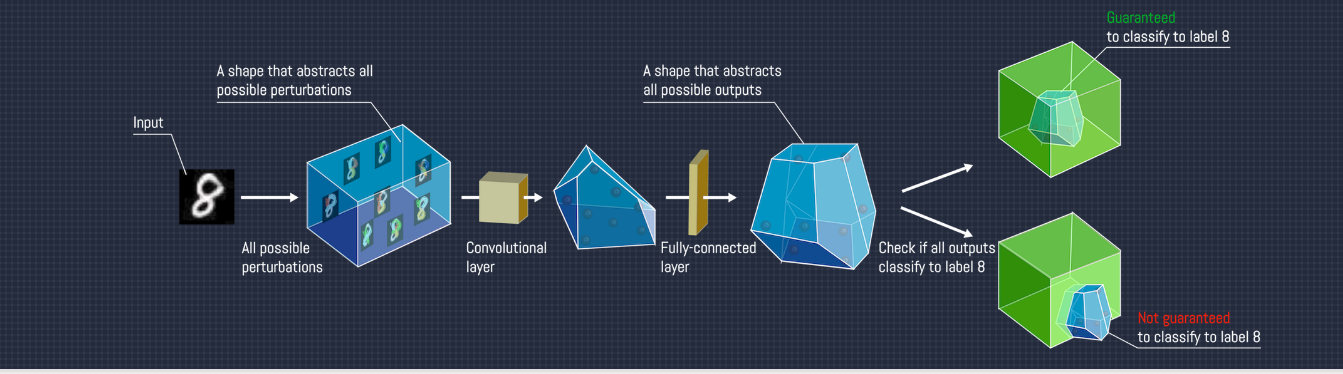

AI2: Safety and Robustness Certification of Neural Networks with Abstract Interpretation

Timon Gehr, Matthew Mirman, Dana Drachsler-Cohen, Petar Tsankov, Swarat Chaudhuri, Martin Vechev

IEEE S&P

2018

Talks

Safe and Robust Deep Learning

Waterloo ML + Security + Verification Workshop

Safe and Robust Deep Learning

University of Edinburgh, Robust Artificial Intelligence for Neurorobotics 2019

AI2: AI Safety and Robustness with Abstract Interpretation

Machine Learning meets Formal Methods, FLOC 2018